Neutral Atoms' Moment

What The Neutral Atom Surge Means for Quantum Computing

Google Quantum AI has announced that it is adding neutral atom quantum computing to its roadmap, hiring Adam Kaufman from JILA and CU Boulder to lead a new hardware team in Colorado. The news broke just days ago and has already reshaped the conversation about the field's future. It comes on the heels of Microsoft's deepening partnership with Atom Computing, a collaboration that has produced 24 entangled logical qubits and promises on-premises system deliveries, and together the two moves mark a striking inflection point. Two of the largest companies investing in quantum computing hardware have both concluded, within roughly eighteen months of each other, that superconducting qubits alone may not be enough.

This is not a small signal. And it deserves more than a press release readout.

The Time-Space Tradeoff

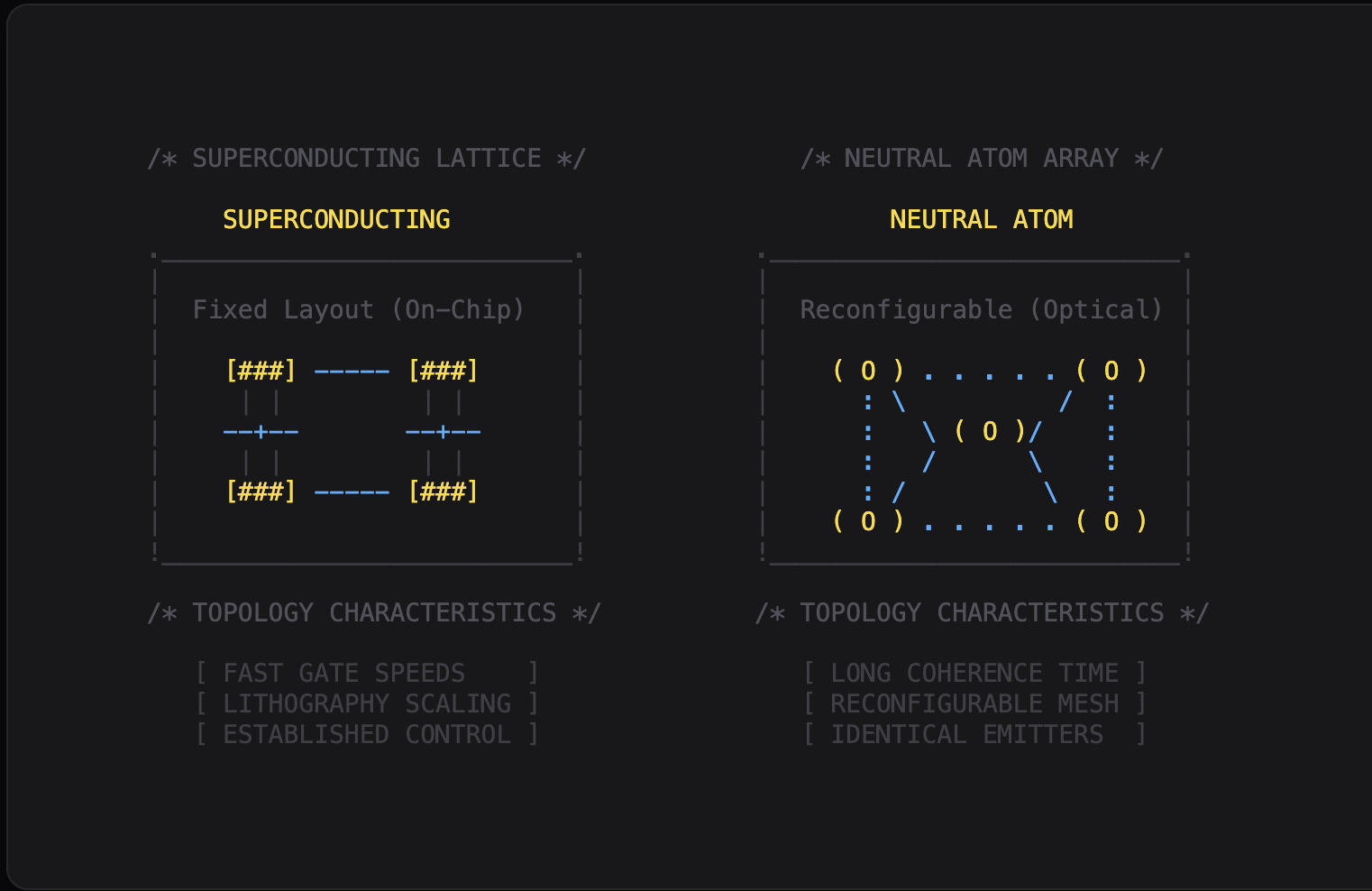

Hartmut Neven, who leads Google Quantum AI, framed the dual-modality strategy with a precision that cuts to the heart of the matter. Superconducting processors, he wrote, are easier to scale in the "time dimension" — circuit depth, gate speed, the rapid-fire execution of millions of operations at microsecond cycle times. Neutral atoms are easier to scale in the "space dimension" — raw qubit count, with arrays of roughly 10,000 already demonstrated in research settings, and any-to-any connectivity that makes qubits far more flexible to work with.
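To make the connectivity point concrete, here is a deliberately simplified model. It assumes a square grid of fixed qubits with nearest-neighbor couplings (roughly the superconducting case) versus idealized hardware that can move one atom next to any partner (the neutral atom case). On the grid, a gate between two distant qubits first needs a chain of SWAPs; with movable atoms it needs one transport step. The function names are hypothetical, and the model ignores the routing optimizations real compilers perform.

```python
# Simplified routing model (illustrative assumptions, not a real compiler):
# on a fixed 2D grid with nearest-neighbor couplings, two distant qubits must
# be brought together with SWAP gates; hardware that can move atoms brings
# one qubit next to its partner in a single transport step.

Position = tuple[int, int]
CNOTS_PER_SWAP = 3  # a SWAP decomposes into three CNOTs


def manhattan(a: Position, b: Position) -> int:
    """Grid distance between two qubit sites."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def grid_swaps(a: Position, b: Position) -> int:
    """SWAPs needed to make a and b neighbors along a shortest path."""
    return max(manhattan(a, b) - 1, 0)


def shuttle_moves(a: Position, b: Position) -> int:
    """Idealized movable qubits: one move unless already adjacent."""
    return 0 if manhattan(a, b) <= 1 else 1


for a, b in [((0, 0), (0, 1)), ((0, 0), (10, 10)), ((0, 0), (99, 99))]:
    swaps = grid_swaps(a, b)
    print(f"{a} -> {b}: grid {swaps} SWAPs (~{swaps * CNOTS_PER_SWAP} CNOTs), "
          f"movable atoms {shuttle_moves(a, b)} move(s)")
```

On the grid the cost grows linearly with distance (197 SWAPs, about 591 CNOTs, corner to corner on a 100 × 100 grid), while the movable-atom cost stays constant. The catch is time: each atom move is slow, which is exactly the time-space tension Neven describes.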

This framing is useful because it's honest about what each modality can't do. Superconducting systems can't easily scale to tens of thousands of qubits: every qubit needs microwave signaling leads that take up physical space, introduce electromagnetic interference, and add thermal load to the dilution refrigerator. As I wrote in my forthcoming book, The New Quantum Era, current fabrication techniques will probably top out at around 10,000 qubits per fridge. Neutral atoms, meanwhile, can't yet run deep circuits with many cycles: their millisecond-scale cycle times, dominated by moving and measuring atoms, are roughly three orders of magnitude slower than superconducting operations. A sizable chemistry simulation on a neutral atom machine might take six months or a year to complete. That's a lot of time for something to go wrong.
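The three-orders-of-magnitude gap carries straight through to wall-clock time. The sketch below assumes illustrative cycle times of 1 µs for a superconducting machine and 1 ms for a neutral atom machine, plus a hypothetical workload of 15 billion cycles; none of these figures describes a specific device or algorithm.

```python
# Back-of-envelope runtime: cycles x cycle time.
# Every number here is an illustrative assumption, not a device spec.

SECONDS_PER_HOUR = 3_600
SECONDS_PER_DAY = 86_400

CYCLES = 1.5e10                  # hypothetical deep-circuit workload
SUPERCONDUCTING_CYCLE_S = 1e-6   # assumed ~1 microsecond per cycle
NEUTRAL_ATOM_CYCLE_S = 1e-3      # assumed ~1 millisecond per cycle


def runtime_seconds(cycles: float, cycle_time_s: float) -> float:
    """Wall-clock time if every cycle takes the same fixed duration."""
    return cycles * cycle_time_s


sc = runtime_seconds(CYCLES, SUPERCONDUCTING_CYCLE_S)
na = runtime_seconds(CYCLES, NEUTRAL_ATOM_CYCLE_S)

print(f"superconducting: {sc / SECONDS_PER_HOUR:.1f} hours")
print(f"neutral atom:    {na / SECONDS_PER_DAY:.0f} days")
print(f"slowdown:        {na / sc:.0f}x")
```

With these inputs the superconducting run finishes in about four hours, while the neutral atom run takes about 174 days, close to six months. The slowdown is simply the ratio of cycle times, so it holds regardless of workload size.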

Neither modality has produced what I've called the "transistor moment" for quantum computing — the breakthrough that would clearly resolve the race in favor of one approach. Google's move is an explicit acknowledgment that they don't expect one to arrive soon.

Why Error Correction Is Driving the Pivot

The real story behind these strategic shifts isn't qubit count or gate speed — it's error correction. And here, neutral atoms have a structural advantage that is becoming impossible to ignore.

When IBM published their quantum LDPC strategy in 2023, it was a brilliant theoretical result: 12 logical qubits from 288 physical qubits, maintained for a million cycles. But the 288 qubits were laid out in a biplanar chip architecture completely unlike anything IBM had ever built. As I discuss in my book, this means a delay of two to three years or more for their chip design, fabrication, and packaging teams to retool everything. IBM is in the process of making a biplanar chip with 144 qubits on each plane, with long-distance connections for error correction. That error correction only protects Clifford gates, so non-Clifford operations require a separate chip that distills magic states and teleports gates into the biplanar chip. It's admirable and remarkable, but it's also a measure of how much contortion is required to make a superconducting device do error correction while harnessing those fast gate speeds.
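The appeal of IBM's result is encoding efficiency. A rough comparison, assuming the parameters [[144, 12, 12]] reported for IBM's "gross" code (144 data qubits plus 144 check qubits) and a rotated surface code that spends 2d² − 1 physical qubits per logical qubit at the same distance d = 12. This is a qubit-count comparison only; it ignores the magic-state hardware both approaches still need.

```python
# Rough physical-qubit overhead: IBM's "gross" qLDPC code vs. surface code.
# Assumptions: gross code [[n=144, k=12, d=12]] plus one check qubit per
# data qubit; rotated surface code with d*d data + (d*d - 1) measure qubits
# per logical qubit, at the same distance d = 12.

LOGICAL = 12
DISTANCE = 12


def gross_code_qubits(data: int = 144, check: int = 144) -> int:
    """Physical qubits in one gross-code block (encodes 12 logical qubits)."""
    return data + check


def surface_code_qubits(logical: int, distance: int) -> int:
    """Physical qubits for `logical` independent surface-code patches."""
    return logical * (2 * distance**2 - 1)


ldpc = gross_code_qubits()
surface = surface_code_qubits(LOGICAL, DISTANCE)
print(f"gross code:   {ldpc} physical qubits for {LOGICAL} logical")
print(f"surface code: {surface} physical qubits for {LOGICAL} logical")
print(f"saving:       {surface / ldpc:.1f}x")
```

Under these assumptions the LDPC block is about twelve times leaner (288 versus 3,444 physical qubits). The price is connectivity: its parity checks reach across the chip, which is why the layout departs so sharply from anything IBM had built before.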

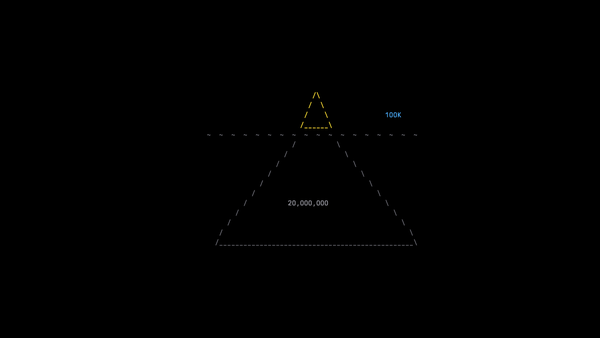

Then, in December 2023, Mikhail Lukin and Vladan Vuletić did something extraordinary. Using a reconfigurable neutral atom array of up to 280 atoms, their team realized 48 logical qubits, exploiting the kind of long-range connectivity that IBM's LDPC approach depends on. They built what was essentially a von Neumann machine floating in a lattice of lasers, with special-purpose zones for entangling qubits, performing gates, storage, ancilla qubits, syndrome detection, and readout. All-to-all connectivity made this natural. LDPC stands for "low-density parity-check," and codes in this family need checks that link distant qubits. That is easy when you can physically move your qubits through space to entangle them, then move them out of the way while preserving the entanglement.

When I interviewed Alex Keesling, CEO of QuEra, on The New Quantum Era podcast, he described how these devices work and where they were heading with digital gates for universal computation. The scientific results were already impressive: lattice gauge theories, explorations of exotic states of matter, Feynman's original vision of quantum simulation being realized. But the error correction result was what truly changed the landscape. The 48 logical qubits from 280 physical qubits implied that QuEra might deliver a machine capable of performing operations impossible to simulate classically. IBM's 12 logical qubits, by contrast, could be simulated on a modern laptop.
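The laptop comparison follows from the memory needed for brute-force state-vector simulation, which stores 2ⁿ complex amplitudes for n qubits. A sketch, assuming 16 bytes per amplitude (double-precision complex) and ignoring cleverer simulation methods that work well on some structured circuits:

```python
# Memory for brute-force state-vector simulation of n qubits.
# Assumption: one double-precision complex amplitude (16 bytes) per basis
# state; cleverer simulators can do far better on structured circuits.

BYTES_PER_AMPLITUDE = 16
UNITS = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB"]


def statevector_bytes(n_qubits: int) -> int:
    """Bytes needed to hold all 2**n amplitudes."""
    return (2**n_qubits) * BYTES_PER_AMPLITUDE


def human(nbytes: int) -> str:
    """Format a byte count with binary (1024-based) prefixes."""
    value, i = float(nbytes), 0
    while value >= 1024 and i < len(UNITS) - 1:
        value /= 1024
        i += 1
    return f"{value:.0f} {UNITS[i]}"


for n in (12, 48):
    print(f"{n} qubits: {human(statevector_bytes(n))}")
```

Twelve qubits need 64 KiB, trivial for any laptop; 48 qubits need 4 PiB, beyond the memory of any single machine. This is the worst case: a circuit has to be complex enough to require the full state vector before the 48-qubit figure is truly out of classical reach.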

Google saw this. Microsoft saw this. Now both are acting on it.

The Pure Plays: Validation and Threat

The capital flowing into neutral atom companies right now is staggering. In the span of a few months: QuEra secured over $230 million from Google Quantum AI, SoftBank, and NVIDIA. Infleqtion completed its SPAC merger and began trading on the NYSE under the ticker INFQ on February 17th, raising over $550 million. Pasqal announced a SPAC deal valuing the company at $2 billion, with expected proceeds exceeding $600 million.

When Dana Anderson joined me on the podcast to discuss Infleqtion's neutral atom work, he traced the lineage all the way back to the Bose-Einstein condensate work at JILA in the 1990s with Nobel laureates Carl Wieman and Eric Cornell. Anderson's father had worked with Enrico Fermi; the physics runs deep. What struck me about that conversation was his conviction that neutral atoms weren't just another modality competing for the same computational goals, but a platform with a much broader set of applications: clocks, sensors, accelerometers, RF receivers. Infleqtion's dual focus on computing and sensing, along with their early traction with the Department of Defense and NASA, gives them a revenue story that pure computing plays don't have.

But there's an uncomfortable question embedded in all this good news for the neutral atom community. When your biggest potential customers start building in-house capability, it is both the ultimate validation and the beginning of a threat. Google invested in QuEra and hired Adam Kaufman to build their own team. Neven's blog post says Google will "continue to collaborate" with QuEra — language that is notably non-exclusive and leaves plenty of room for Google to develop proprietary advances. The historical parallel to cloud computing is worth considering: AWS validated the market for every cloud startup, then proceeded to build competing services.

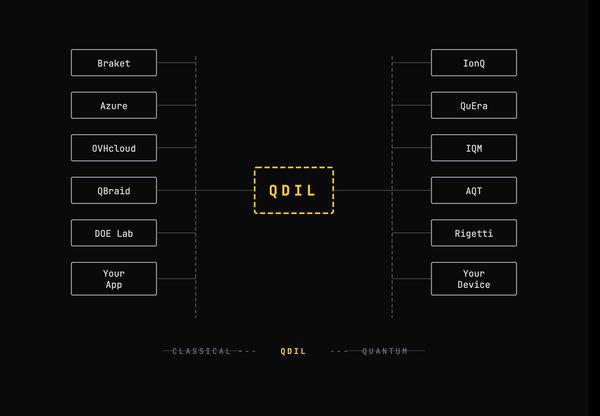

For Pasqal, the dynamics are slightly different. They've pursued a more independent path, deploying QPUs to HPC centers in France and Germany and making their machines available through Azure, Google Cloud, OVHcloud, and Scaleway. Their European sovereign-compute positioning and diversified customer base insulate them from the hyperscaler partner risk that Atom Computing and QuEra face more directly.

What This Doesn't Mean

It would be easy to read Google's announcement as an obituary for superconducting qubits. It isn't.

Google's Willow chip, with its 105 superconducting qubits, achieved something genuinely historic in late 2024: the first convincing experimental demonstration that surface code error correction gets better as you add more qubits. The distance-7 logical qubit outlived its best physical qubit by a factor of 2.4. That's the milestone the field had been chasing since Shor's first code in 1995. Google's acquisition of Atlantic Quantum brings Will Oliver and his team into the fold and bodes very well for their future roadmap.
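Below threshold, "gets better as you add more qubits" has a precise shape: each increase in code distance from d to d + 2 divides the logical error rate by a suppression factor Λ greater than 1, while the qubit count grows only as d². A sketch, assuming an illustrative Λ = 2 and a hypothetical 0.1% logical error per cycle at distance 7; these are round numbers chosen for clarity, not Willow's measured values.

```python
# Below-threshold surface-code scaling: raising the code distance by 2
# divides the logical error rate by a suppression factor LAMBDA > 1.
# Illustrative assumptions: LAMBDA = 2.0 and a logical error rate of 1e-3
# per cycle at distance 7 (round numbers, not measured values).

LAMBDA = 2.0
EPS_AT_D7 = 1e-3


def logical_error_rate(distance: int) -> float:
    """Projected logical error per cycle for an odd distance >= 7."""
    if distance < 7 or distance % 2 == 0:
        raise ValueError("distance must be odd and at least 7")
    steps = (distance - 7) // 2
    return EPS_AT_D7 / LAMBDA**steps


def physical_qubits(distance: int) -> int:
    """Rotated surface-code patch: d*d data + (d*d - 1) measure qubits."""
    return 2 * distance**2 - 1


for d in (7, 9, 11, 13, 15):
    print(f"d={d:2d}: {physical_qubits(d):3d} qubits, "
          f"logical error ~{logical_error_rate(d):.1e} per cycle")
```

Under these assumptions each step halves the logical error while adding a modest number of qubits, which is why pushing hardware further below threshold (a larger Λ) pays off so quickly.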

Of course, IBM remains entirely committed to superconducting qubits through their roadmap to 2033, targeting quantum advantage by the end of 2026 and a fault-tolerant Starling system by 2029. IQM and Rigetti keep plugging away, with IQM announcing its own move to go public. Alice & Bob, Nord Quantique, and many other pure plays keep advancing the state of the art for the superconducting modality.

And superconducting qubits still have that enormous speed advantage. Gate operations that are orders of magnitude faster matter a great deal for the deep circuits that practical applications will demand. As I argue in the book, superconducting qubits may ultimately overtake atom-based systems by drawing on the knowledge that atom-based systems unlock. Neutral atoms may be the test bench that accelerates the design of other types of qubits, providing a proving ground for error correction and topologies.

But the speed advantage only matters if you can actually build the machine at the scale required to exploit it. And right now, the scaling question for superconducting systems is genuinely unresolved.

The Bigger Picture

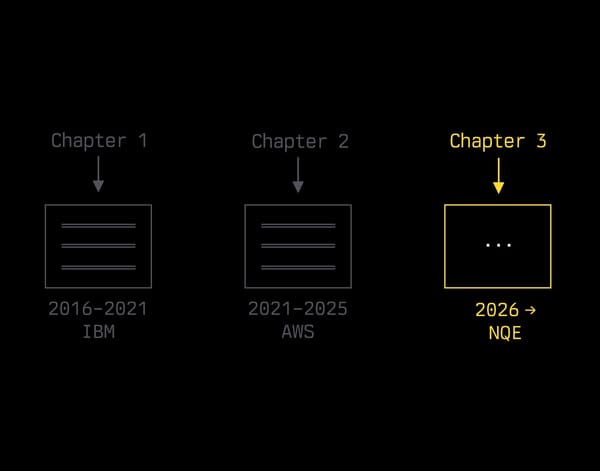

What I find most interesting about this moment isn't the technology — it's what it reveals about how the field is maturing.

For years, the quantum computing industry operated under a tacit assumption that one modality would win. The venture capital framing, the conference panel debates, the analyst reports — they all treated it as a horse race heading toward a single finish line. Google's announcement, and Microsoft's partnership with Atom Computing before it, suggest something different. We may be heading toward a landscape where different modalities serve different computational tasks, where hybrid architectures combine the speed of one platform with the scale of another, and where the "winner" is determined problem by problem rather than once and for all.

This is, in some ways, a more honest assessment of where we actually are. As I wrote in the final chapter of my book: we do not have a history of successful predictions for how technologies will be used. IBM's CEO reportedly once predicted a world market for maybe five computers. Every qubit fabricated, every attempt to find an algorithm, every experiment that combines classical code with quantum circuits is like another packet of hope launched into the dark.

The neutral atom surge is real, and it's backed by serious money and serious science. But the history of quantum computing is littered with modalities that had their moment in the sun. NMR qubits produced fascinating science before their progress stalled, a reminder that momentum is not destiny. The question isn't which qubit will win. It's whether any of them can cross the vast gap between laboratory demonstration and industrial-scale computation.

Google just placed a big bet that two approaches are better than one for getting there. Given the scale of the challenge, they might be right.